
So you've set your sights on a staff-plus title, or you're looking to grow in your role. How do you proceed?

This post originally appeared as a guest article on LeadDev.com titled “How to Expand Your Scope as a Staff Engineer”.

You’ve been a solid senior engineering lead for the past several years at your current company. You’re well respected among your teammates and have a strong track record of shipping impactful products and features. However, you can’t shake the feeling that your career growth has stalled and that your prospects are limited where you stand.

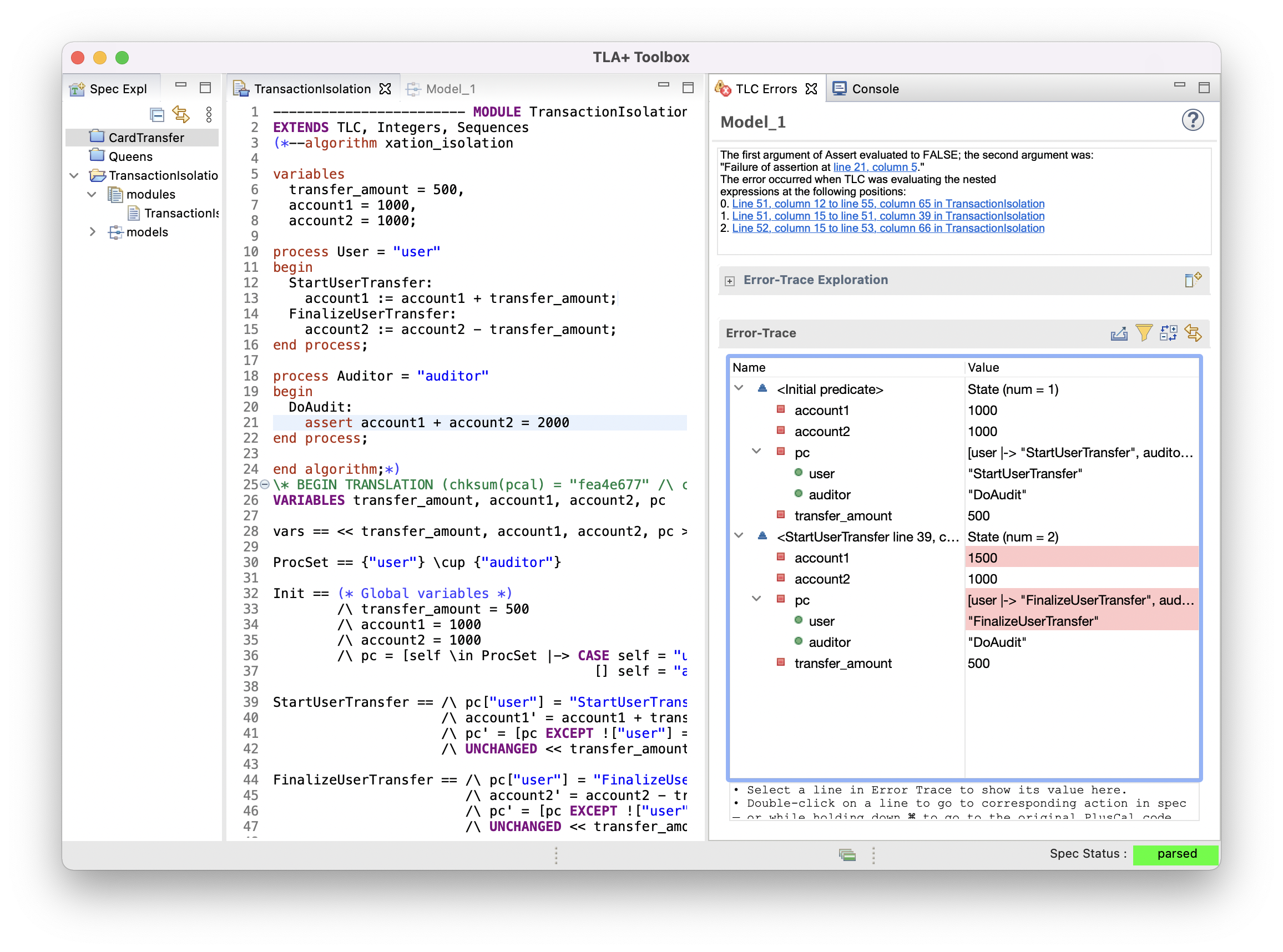

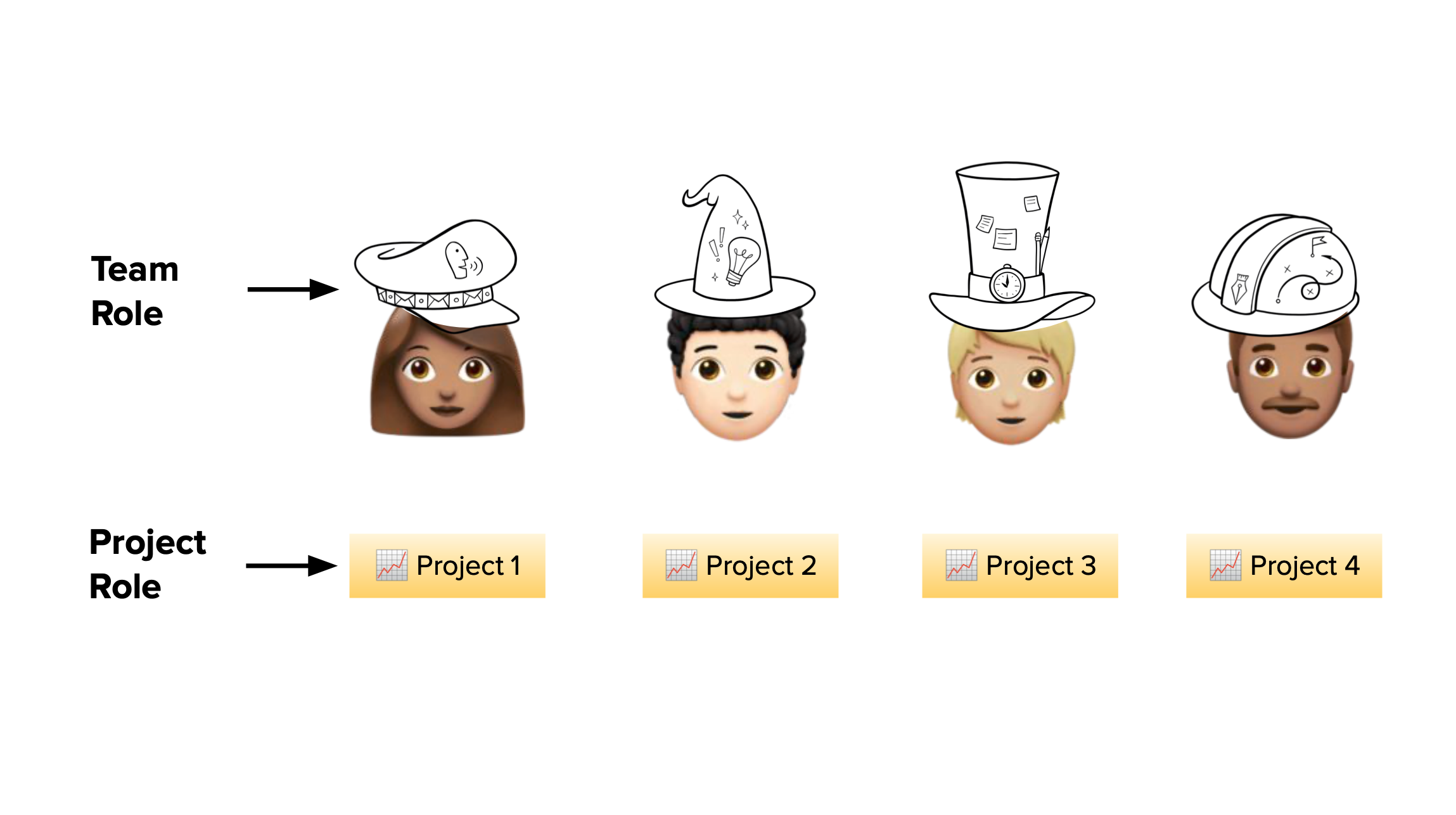

Your mandate as a staff engineer is to have a deep impact across multiple teams and the organization, but the road to get there is unclear. Maybe your position on your current team limits the types of projects you can execute, or your manager is too busy to help you grow. Perhaps you’re a new staff hire struggling to navigate the landscape of the organization and looking for the most impactful places to operate. Or you may be experiencing the opposite problem: you’re underwater with a flood of small projects that aren’t large enough to get you where you want to be.

These scenarios all share a common thread - the scope and influence you currently hold are not large enough to tackle the deep, cross-cutting projects you want to lead as your career advances. Let’s discuss how you can get there!

Why build influence?

For some of us, the thought of growing our influence may conjure up bad experiences at dysfunctional organizations, where kingdom-building and power games were the norm. For others, growing influence feels like a zero-sum game: to grow my scope, I need to take it away from someone else. And some of us feel an icky sense of dread that we’ll need to cozy up to people in authority. Building influence or scope feels intimidating, aggressive, or at odds with our collaborative instincts.

On the other hand, we know that we can’t just sit on our hands. We want to believe that in an ideal world, if we just quietly do the work, we’ll naturally get noticed and people will give us credit. In this ideal world, people would step aside to create opportunities for us when we’re deemed ready. Unfortunately, good work is not always noticed, and your internal ambitions are not always recognized.

The good news is that there is a third way - a way where you can be responsible for your own growth and trajectory, without the power games. Growing your influence can be done by naturally leaning into collaboration – here’s how.

Build your network - and let your ambitions be known

At this point in your career, it is a given that your technical skills are strong. They have served you well up to this point, but they will not (usually) be your primary means of growth in this phase of your career. Instead, it’s your relationships and connections that will serve as the catalyst for your growth.

Why build a network?

As a staff engineer, your responsibility is to understand what’s going on at all levels of the organization, linking leadership strategy to what’s happening on the ground. To that end, you’re going to need relationships and touch points that give you insight into what other teams are doing. You’re going to need to meet people outside your circle who can show you the parts of the organization you can’t see from where you sit - and fill in the context you’ve been missing.

You’re also building relationships with other teams that you can lean on informally when you need a favor - and that can lean on you when they need something in return.

The word “network” is no doubt loaded with notions of clammy hands, awkward small talk and unwanted inbound LinkedIn messages. Once again - it doesn’t have to be this way! Instead, consider a few updated ideas for the modern, remote-work world.

Who should I be talking to?

Some people will be obvious to connect with. For example, you may want to set up recurring 1:1 syncs with leads on adjacent teams in your immediate group. In these syncs, consider filling each other in on your team roadmaps and the common challenges you face. Some of these conversations may be fertile ground for identifying problems that can be solved.

Other people worth connecting with may be peers in adjacent organizations - for example, engineers on platform teams may want to reach out to leads on the product teams that are their customers. You may also want to network with peers who share the same function as you (iOS/Android, frontend, data science, etc.). Ask them what challenges they face, and compare notes on any gaps or opportunities you see in your respective roles.

Finally, you may want to schedule time with your skip-level manager or a member of the leadership team. Consider asking them questions about the state of the organization, what challenges they face, and what their top priorities are (see Will Larson’s excellent blog post “Staying aligned with authority”).

Let your ambitions be known

Many of us aren’t comfortable revealing our career ambitions to others. However, holding back on conversations with your manager or a more senior sponsor along the lines of “I want to grow my scope so I can get a title at the next level” or “I’d like to have a greater role on Project X” will limit their ability to help you. Leaders in organizational authority roles are in the room where decisions are made - and you want them to be aware of your goals so they can position you for that new project or initiative that could help your career break out.

“People who are in the more senior role that you want also have their own goals and career aspirations. While it can be intimidating to ask someone ‘How do I get your job?’, remember that they probably don’t want to hold onto that role forever,” advises Ashley Kasim, a Staff Engineer at Lyft. “Uplifting me is a part of their journey to get to where they want to go too. Now that I’m in my current role, I’m also trying to grow my replacement. It’s about mutual benefit.”

Genuinely listen and offer help

It’s a bit cliché to say, but Dale Carnegie’s advice from How to Win Friends and Influence People still stands today: the best way to build your influence is to freely offer your genuine self. Offer to do a favor for a team that’s feeling crunched. Share your time as a mentor or sponsor for someone who needs it. Celebrate and elevate the wins of teams around you. Make sure you’re really listening as people share their wins and their struggles. Remember the details of what they share - from teammates’ names to the particular challenges they face on specific projects. Do this freely, with no strings attached. Come crunch time, you may be surprised at how readily people will return the favor.

In today’s remote-work environment, it’s important to remember to make personal connections with people. It is too easy to start meetings by diving straight into business and forgetting to connect with the human behind the face on the screen. Personally, I treasure the chance to make small talk. I love learning about people’s vacation plans, going down a rabbit hole on a shared interest for a few minutes, or sharing a laugh over a funny story I heard the other day. This breaks the monotony of back-to-back calls and opens an opportunity for camaraderie, levity and connection. Ultimately, these little actions build trust - the raw currency you need to operate effectively at your level.

Find the right problems and opportunities

As you build your network, you’ll also want to identify problem spaces that may be opportunities for your growth. Here are a few ideas.

Connect the dots

In addition to your 1:1s, position yourself to receive information pushes from different parts of the organization. Join other teams’ Slack channels, where you can get a pulse on project status and updates. Add yourself to email distribution lists where you can receive project updates asynchronously. Attend an all-hands meeting for an entirely different group.

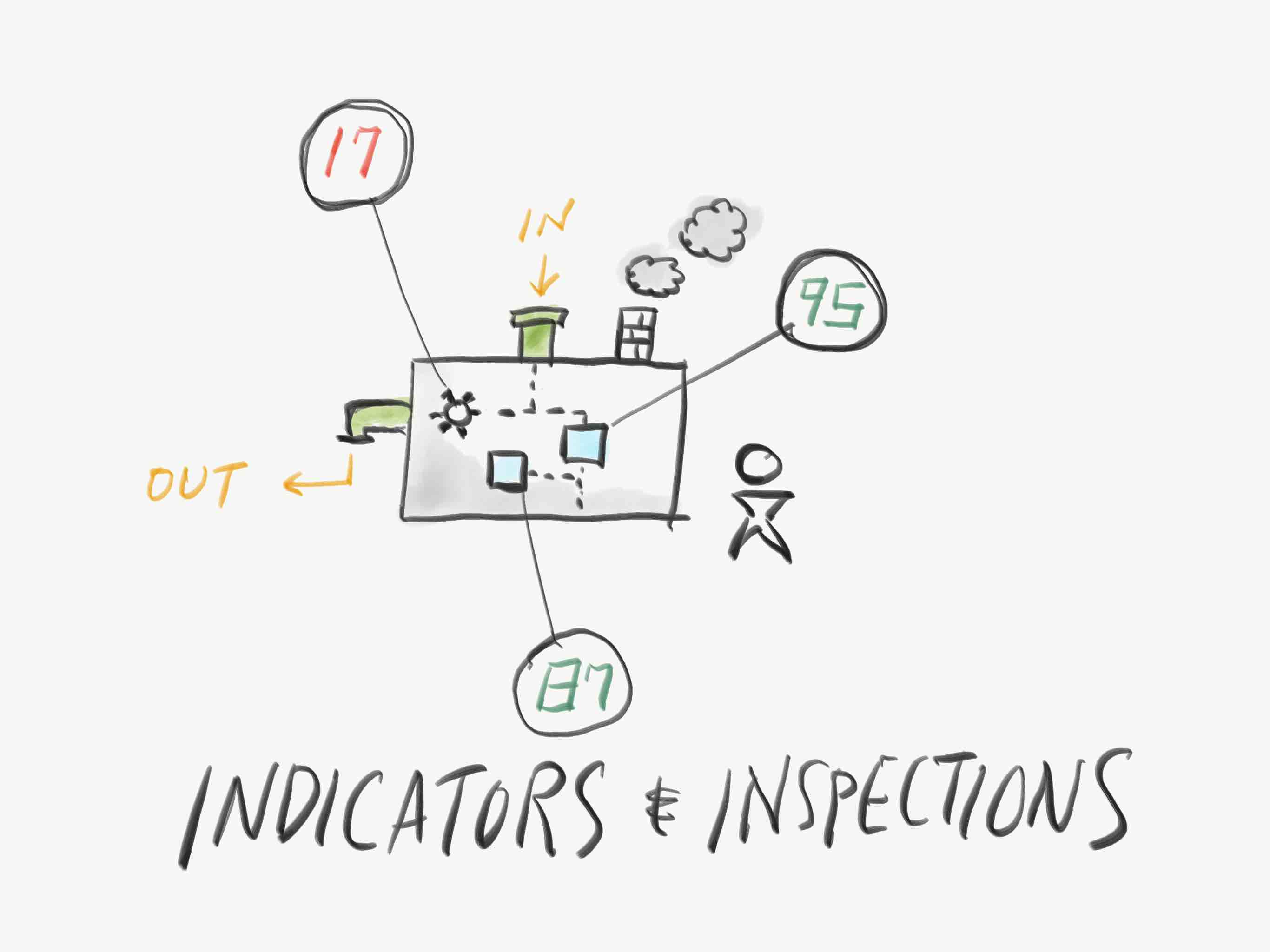

By being everywhere, you may spot a shift in product strategy in a group upstream from yours, or jump on a new platform that has collaboration potential for you. You are now uniquely positioned to connect the dots on problems or opportunities across the company:

- CI frustrations? There might be an org-wide gap in platform tooling.

- Patterns and cycles of rework? There might be a stakeholder you share who is contributing to the rework.

- Duplicate systems doing identical tasks? There may be an opportunity to create a shared platform.

- Big wins or ships from other orgs? Get in touch with adjacent teams to learn from their process, or compare notes from similar projects.

- Pivoting product strategy in a different line of business? This may give you advance notice of organizational or product changes that could ripple into your org.

Your job is to synthesize that kind of information and use it to create new innovation opportunities for yourself and your team.

Look at the seams

Opportunities can often be found in the seams where one team ends and another begins. For example:

- If your team is responsible for one part of the user experience and then hands it off to another team to handle another part of the experience, is there friction in the handoff?

- If one team depends on another, are the interfaces between system responsibilities well-designed? Are SLOs in place to provide targets for system reliability?

- If a neighboring team works in a shared system, how is the quality or health of the shared platform? Are there opportunities to lead initiatives to improve quality, encapsulation, or abstraction?

Spread your impact by leading with your strengths

Another way to come up with impactful projects is to do an assessment of your personal strengths and follow them to see if there are opportunities within the company. Maybe you’re a gifted teacher and coach, or you’re a deeply technical data scientist in your team domain. Now take a completely different axis of the business and imagine what it would look like to offer your leadership there.

- As a gifted teacher, how might you level up the testing culture of your fellow engineers - through workshops or starting a community of practice?

- As a technical expert in your domain, how might you help an entirely different business function - marketing, legal, or finance - understand and deepen their fluency in your expert area?

- As a mobile engineer, how might you build a skills library for server or frontend engineers who might want to contribute to your codebase?

- As an accessibility advocate, how might you use your expertise to drive change at a company-wide level?

Be wise about the projects you choose - and collaborative in your style

By now, you no doubt have a long list of potential opportunities or projects to tackle. But wait - you can’t tackle them all at once. You need to be strategic about what to advocate for and how to build the case to get the green light.

Align to your org’s scope

More likely than not, you’ll have more than enough opportunities to start new projects or contribute to high-impact initiatives. However, it’s important to make sure those opportunities are aligned with the goals of your organization.

Are you on a product team, but you see a glaring need for an infrastructure improvement? Instead of offering to build a grand, universal solution for the whole company, you may consider building out a local proof of concept for your team - then work with the platform team to integrate your work with theirs.

A good question to ask yourself is: _If I take on this project, does my organization or group move faster?_ Some of the reasons for this are pragmatic for your career development - your peers and team leadership are the ones who will be validating your work, and arguably the ones who know you best. Other reasons are pure Conway’s Law concerns - you will succeed most when you are working with the systems and the teams you’re most familiar with.

However, don’t be afraid to push the boundaries of your org scope. After all, it’s at the seams that opportunities can be found!

Lead with a collaborative style

When advocating for expanding your scope, you want to create buy-in. I prefer a style of collaboration that leads with the mindset of solving problems together. Let’s imagine a situation where you are advocating for a solution that moves into another team’s domain. You might try the following:

- Build rapport and empathy by meeting 1:1 with the tech lead and hearing about their problems. Use this time to learn their roadmap and identify the top OKRs and projects on their minds.

- Offer up a proposal: “I’ve been hearing about this problem on your end for some time and I wrote up a draft proposal for something that might help and be a win for both of us - would you mind reading it and telling me what you think?”

- Frame the discussion as a mutual win - if you or your team take on this new initiative or scope, how would it benefit both your teams? How would it move the needle on their metrics?

Be aware that if you move into someone else’s scope, you will almost certainly be creating more work for them - so make sure that what you offer is a clear win for the other side. Even then, when proposing an increase in scope, you should be ready to receive a polite rejection. That’s completely normal - and it gives you the green light to move on to your next project idea!

A few plays

Finally, the tactical part of the picture. Expanding your scope may involve running a few “plays”, or actions, that help you build the scope you are looking for.

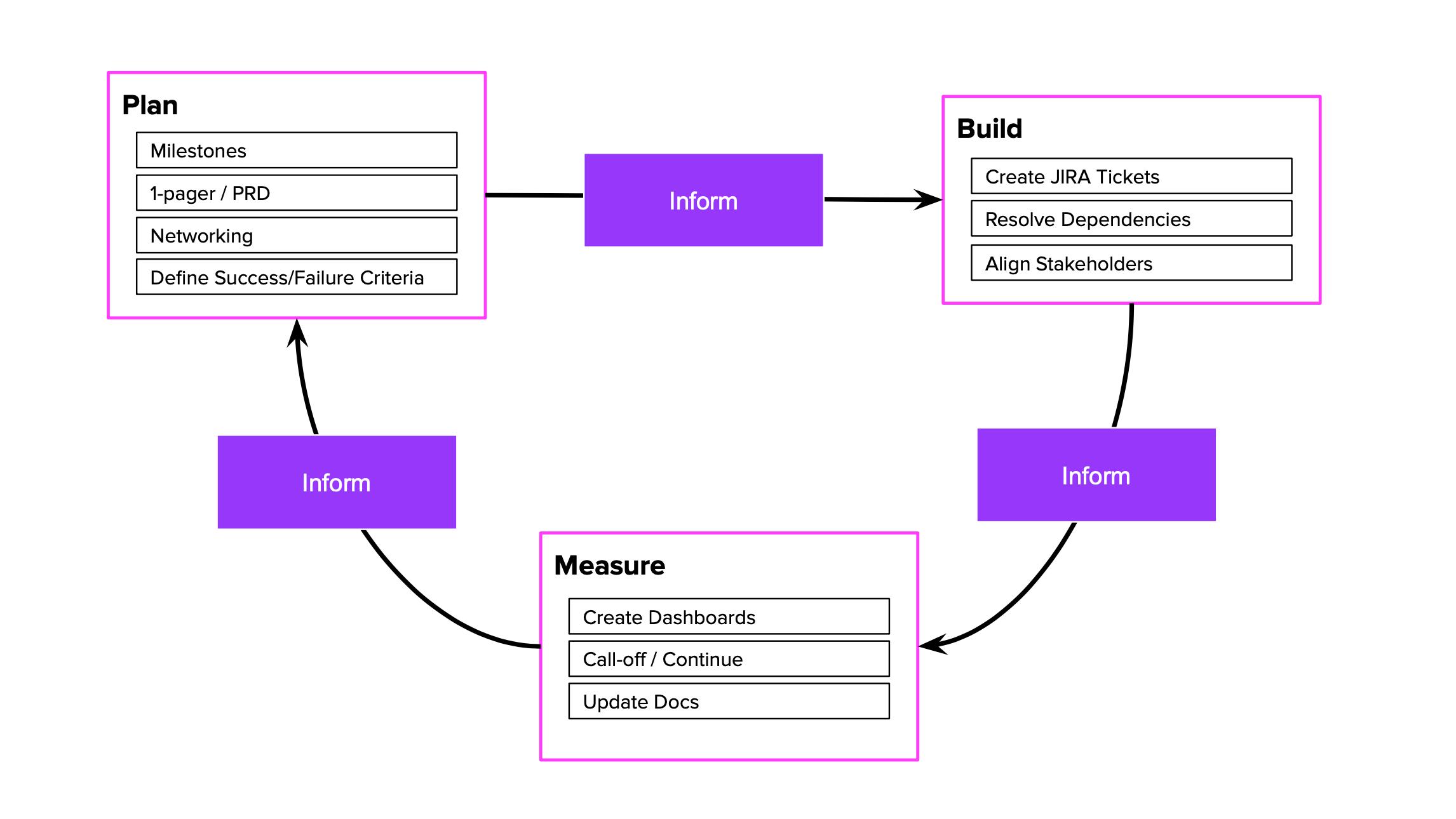

Lead (and land) an important initiative for your team(s)

In my experience, this is the most common sign of operating at a Staff-plus level: running a multi-team project that ships something complex and important for the larger group. Work with your manager and your network to get on a project with organization-wide surface area. These types of projects tend to have multiple phases, require buy-in from teams across the org, and have a visible impact on the group’s OKRs. As a lead, you should be delegating to the team, helping unblock it, and clarifying project timelines, dependencies, and status outward to relevant stakeholders.

It’s important not to be coding on the critical path, where you can end up neglecting your responsibilities around product leadership, technical guidance and helping the team make crucial architecture decisions. That’s why delegation will be your superpower here.

Take over a leadership role for a project outside your team

Your manager or a peer in an adjacent org may inform you of a project that is seeking additional help or leadership due to circumstances that are risking its delivery. Consider joining the project as a player-coach - as you help steady the delivery of the project, you’ll be building domain knowledge outside your immediate team and leveling up coworkers on other teams. This domain knowledge - and the relationships you build outside your world - will help you expand your reputation.

Have you built a solution that solves a problem for other teams, such as a machine learning model, a UI library, or an API? You may want to mature this offering into a general solution for other teams to consume. Much has been written on this topic, but in general you will want to solicit concrete use cases from one or two early adopters, whose needs can guide you as you gradually evolve your system from a bespoke solution into an extensible platform.

Advance a competency across functional areas

Is there a skill gap that you see across a functional role? Maybe there’s an opportunity to upskill engineers by leading workshops or starting a community of practice, whether around clean coding practices, testability, performance, or a new tool, programming language, or framework. Best of all, you don’t have to be an expert to accomplish this - you can pitch it as a way of learning and upskilling together, and take the lead in assembling the team.

Improve a process or capability across the company

Perhaps you can improve how people work by tweaking an agile practice or process that long ago stopped working for them. You may notice a gap in how you conduct hiring reviews, or see a need to implement an architecture review process. You may champion new programs, such as starting a hiring pipeline for people from nontraditional career backgrounds. As with any organizational change, make sure you have clear buy-in and support from leadership and other stakeholders before proceeding.

Putting it all together

It’s tempting to start from the tactics and think that Staff+ career advancement just means executing and shipping bigger projects. But the reality is,

- If we enter a new project without the right relationships and trust with our coworkers, then our actions may be viewed with suspicion or indifference.

- If we aren’t aligned with information flows (both official and backchannel), then we may miss out on impactful opportunities to intervene.

- If we start a project that has too small a scope, doesn’t solve a real problem, or lacks actual use cases, then it won’t have sufficient visibility or impact across the organization.

- If we get on a project that doesn’t align with our organizational scope, then we will be doing work that is not visible to, or rewarded by, the organization we belong to.

- If we are the ones doing all the heavy lifting and taking all the interesting IC work, then we miss opportunities to grow our teammates by giving them the meaty work. Additionally, we limit the effectiveness of our teams.

Over to you

Take some time to work through the prompts above and do your own introspective work. Is your manager or sponsor aware of your ambition? With whom do you have relationships within the organization - and where do you still need to build them? Where might you plug in to receive information flows? How can you be helpful to others around you?

Growing your network, influence and scope is like nurturing a garden - your progress is hidden for a long time while the roots form underground. However, a day will come when you get to enjoy the fruits. Take your time, have patience, be kind, and stay strategic. You’ll soon be going places!
